Brinker, recently named “Narrative Intelligence Solution of the Year 2026” by The Cyber Review, announced the official launch of its malicious intent-based deepfake detection capability, introducing a fundamentally new approach to combating AI-driven disinformation.

A report from the European law enforcement agency Europol suggests that by the end of 2026, up to 90% of online content could be manipulated. When almost everything is fake, forensic deepfake detection becomes increasingly redundant.

Brinker’s new capability is built around a core metric, Malicious Intent Probability, designed to determine whether manipulated content is being used to harm a community, brand, or organization.

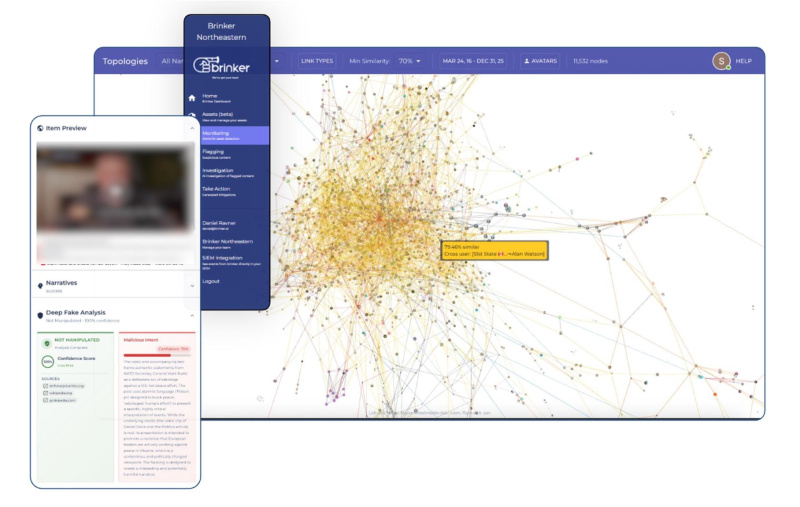

Unlike conventional tools that rely solely on digital image forensics, Brinker’s system evaluates content across key dimensions, including sentiment, alignment with risk scenarios, coherence, context, and corroboration of claims by reliable sources.
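
Brinker has not published how Malicious Intent Probability is calculated. Purely as an illustration of how several per-dimension scores could be folded into a single probability, the sketch below uses a simple logistic combination. The dimension names, weights, bias, and the function `malicious_intent_probability` are all hypothetical and are not taken from Brinker's platform.

```python
import math

# Hypothetical weights, chosen only for illustration; not Brinker's model.
# Each dimension is scored in [0, 1], where 1 means "more suspicious".
WEIGHTS = {
    "negative_sentiment": 1.0,
    "risk_scenario_alignment": 2.0,
    "incoherence": 0.5,
    "context_mismatch": 1.0,
    "lack_of_corroboration": 1.5,
}
BIAS = -3.0  # hypothetical offset so that all-zero scores give a low probability


def malicious_intent_probability(scores):
    """Combine per-dimension scores into one probability with a logistic function."""
    for name in WEIGHTS:
        value = scores[name]
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    # Weighted sum of the dimension scores, shifted by the bias.
    z = BIAS + sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    # The logistic function maps any real number into the range (0, 1).
    return 1.0 / (1.0 + math.exp(-z))


if __name__ == "__main__":
    example = {
        "negative_sentiment": 0.9,
        "risk_scenario_alignment": 0.8,
        "incoherence": 0.2,
        "context_mismatch": 0.6,
        "lack_of_corroboration": 1.0,
    }
    print(f"Illustrative score: {malicious_intent_probability(example):.2f}")
```

In this toy version, a piece of content that closely matches a known risk scenario and has no corroboration from reliable sources scores higher than one that is merely negative in tone, which mirrors the emphasis on intent over forensics described above.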

This analysis is integrated into Brinker’s broader platform, which maps narratives across platforms, languages, and time, enabling organizations to understand the full scope of coordinated influence campaigns. The malicious intent-based deepfake detection feature is now available as part of Brinker’s platform, supporting enterprises, defense organizations, and government agencies in proactively identifying and mitigating AI-driven disinformation threats.

“Brinker is committed to becoming the most innovative intelligence platform in the world,” said Daniel Ravner, CEO of Brinker. “We do not build in a sandbox. We develop our capabilities alongside design partners and clients in real-world scenarios. By championing advancements in agentic OSINT, we enable organizations not only to identify disinformation, but also to act against it.”