Reiterating its commitment to online safety this International Safer Internet Day, Snap Inc. has released new research showing that in 2023, parents found it more difficult to keep up with their teens’ online activities, and parents’ trust in their teens to act responsibly online faltered. This research was conducted across all devices and platforms – not just Snapchat.

The results are part of Snap’s ongoing research into Generation Z’s digital well-being and mark the second reading of our annual Digital Well-Being Index (DWBI), a measure of how teens (aged 13-17) and young adults (aged 18-24) are faring online in six countries: Australia, France, Germany, India, the UK, and the U.S. The latest findings show that globally, parents’ trust in their teens to act responsibly online fell in 2023, with only four in 10 (43%) agreeing with the statement, “I trust my child to act responsibly online and don’t feel the need to actively monitor them.” That is down six percentage points from 49% in similar research in 2022. In addition, fewer minor-aged teenagers (13-to-17-year-olds) said they were likely to seek help from a parent or trusted adult after experiencing an online risk, a drop of five percentage points to 59% from 64% in 2022. Half of parents (50%) said they were unsure about the best ways to actively monitor their teens’ online activities.

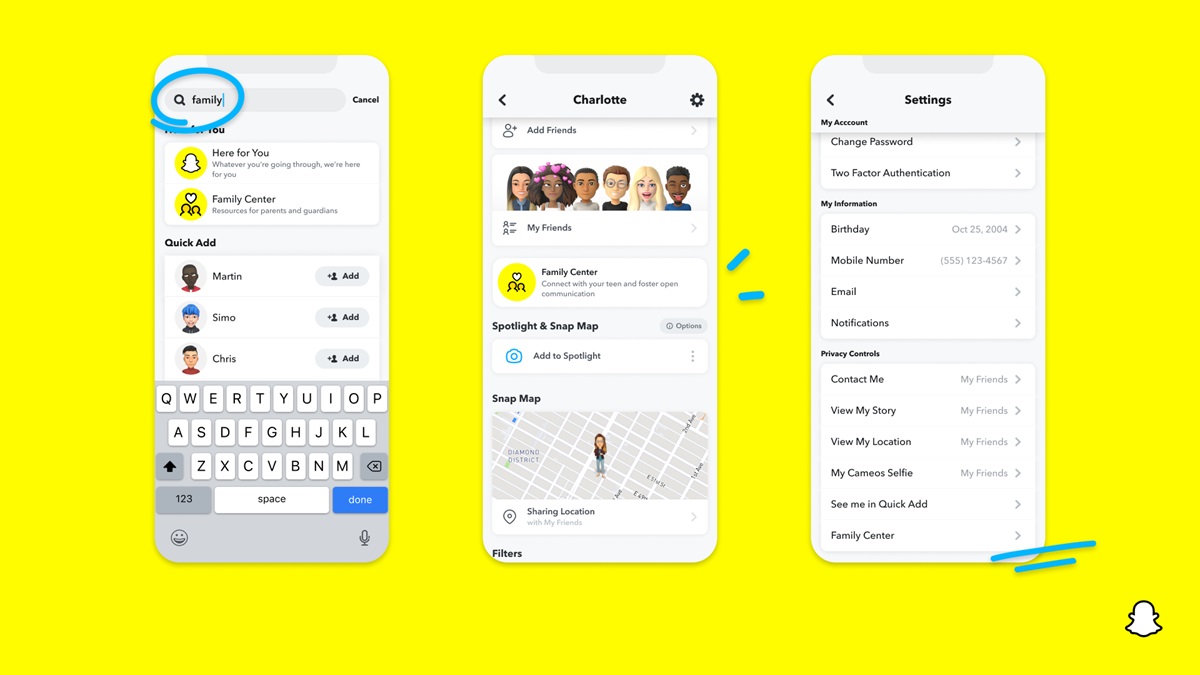

Snapchat has a unique and highly engaged audience in the MENA region, with over 90% of 13-34-year-olds in KSA active on the platform and a reach of one in three 18-34-year-olds in the UAE. With an active younger audience, Snap Inc. continues to leverage these and other research findings to help inform its product and feature design and development, including Snapchat’s Family Center. Launched in 2022, Family Center is a suite of parental tools designed to give parents and caregivers insight into who their teens are messaging on Snapchat, while preserving teens’ privacy by not disclosing the actual content of those communications.

Reiterating its commitment to keeping teens’ online activities secure, Snapchat continues to offer a host of additional safety features to protect young adults online. By default, teens have to be mutual friends before they can start communicating with each other, and they aren’t able to have public profiles. Teens only show up as a “suggested friend” or in search results in limited instances, such as when they have friends in common, making it harder for strangers to find their profiles.

Snapchat also offers confidential, quick and easy-to-use in-app reporting tools, so that Snapchatters can report anything they see that violates the terms. Snapchat’s global Trust and Safety teams work 24/7 to review reports, remove violating content and take appropriate action.

Designed to be brand safe and minimize the spread of harmful content, Snapchat also limits opportunities for potentially harmful content to ‘go viral’. All content on Spotlight and Discover is pre-moderated by humans and computers, making it a safer experience.

To help remove accounts that market and promote age-inappropriate content, Snapchat introduced a new Strike System. Under this system, any inappropriate content that is detected proactively or reported will be removed immediately. Learn more about the Strike System here.